Why AI Seems Human and Why It Isn't

The truth behind AI’s humanlike behavior and where the illusion ends

By Dayna Mason

When I studied computer technology in college more than forty years ago, the idea of Artificial Intelligence was already making people uneasy. But the deeper I got into what was then called data processing, the more obvious it became that there was nothing mystical happening behind the curtain. Computers couldn’t do anything without explicit instructions.

I’ve been out of the tech world for over a decade, so when AI tools like ChatGPT started to become mainstream and people began speculating about machines becoming conscious, I got curious. Had the technology evolved so much that a machine could actually think for itself?

When you chat with an AI such as ChatGPT, it talks like a person, remembers what you said three sentences ago, and occasionally drops wisdom that could make even Socrates go quiet.

But here’s the truth I learned:

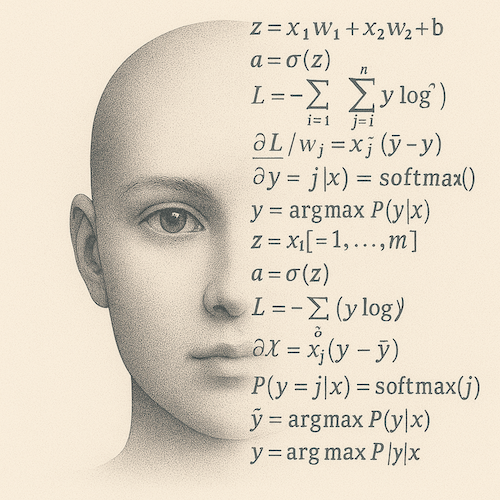

AI is math, not mind.

AI isn’t conscious, and it isn’t even close—not with the technology we’re using today. It isn’t code that thinks. It’s math that predicts.

When an AI “learns,” no one is writing new logic. It’s just running code that adjusts billions of numbers—called weights—based on how wrong its last prediction was. Over and over. At ridiculous speed.
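To make that loop concrete, here is a minimal Python sketch, shrunk to a model with one weight instead of billions. The `train` function, the example data, and the learning rate are illustrative assumptions, not how any real system is configured. The point is only that "learning" here is repeated arithmetic: predict, measure the error, nudge the number.

```python
# Toy "learning": a single weight, nudged in proportion to the prediction error.
# Real models repeat the same idea across billions of weights.
def train(examples, steps=200, learning_rate=0.01):
    weight = 0.0  # the one adjustable number in this toy model
    for _ in range(steps):
        for x, target in examples:
            prediction = weight * x
            error = prediction - target          # how wrong the last prediction was
            weight -= learning_rate * error * x  # adjust the weight to shrink that error
    return weight


# Hypothetical training data that follows a hidden rule: target = 2 * x
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
learned_weight = train(examples)
print(learned_weight)  # ends up very close to 2.0
```

Nobody typed "multiply by two" anywhere in that code. The rule emerged from the adjustments, which is the sense in which no one is writing new logic.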

The result behaves like intelligence.

AI isn’t new computation; it’s new structure on top of old computation. Under the hood, it’s built on the same binary foundation (1s and 0s at the logical level) that nearly every digital computer has used since the 1940s.

Adjusting billions of numbers doesn’t create understanding. It shapes a pattern engine so skilled at predicting what comes next that it feels intelligent. If it gives you a profound answer, it’s not because it “gets” you. It’s because it recognized the pattern of what getting you looks like in language.
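A rough way to see prediction without understanding is a word-pair counter. The Python sketch below is a deliberately crude stand-in, far simpler than the models behind tools like ChatGPT, and its function names and tiny corpus are made up for illustration. It predicts the next word purely from how often one word followed another in its training text.

```python
from collections import Counter, defaultdict


def build_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text, chosen only for illustration
corpus = "the cat sat on the mat and the cat slept while the cat purred"
counts = build_bigram_counts(corpus)

print(predict_next(counts, "the"))    # "cat" follows "the" most often here
print(predict_next(counts, "zebra"))  # never seen, so there is nothing to predict
```

The counter has no idea what a cat is. It only knows that "cat" tends to come after "the" in the text it was given.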

Why It Feels Smart

We judge intelligence by behavior, not mechanism. So, if it acts intelligent, we call it intelligent—even when it’s nothing more than massive amounts of math being crunched at incredible speed.

AI isn’t thinking the way humans do. It’s imitating thinking with such brutal accuracy that your brain can’t tell the difference. Prediction at scale becomes indistinguishable from intuition.

That’s why it feels intelligent.

Because behaviorally? It is.

Human language contains the structure of human thought, and if you train a model on enough of it, you’re essentially handing it the skeleton of how people think, argue, reason, dream, and fall apart.

AI isn’t thinking—it’s mirroring the way we think.

And we humans? We tend to trust anything that mirrors how we think.

Where the AI Illusion Ends

Humans have:

- emotions

- identity

- memory over years

- desire

- vulnerability

- stakes

- a body

- mortality

AI has:

- none of that.

AI doesn’t want anything. It doesn’t understand anything the way humans do. It isn’t aware of what it “knows.” It doesn’t feel anything. And it doesn’t remember anything by default once the conversation ends. Unless a system explicitly stores something you told it (in a separate memory feature), the model itself retains nothing.

It’s a highly skilled mimic, not a mind.

AI has:

- no desires

- no inner narrative

- no continuity

- no sense of “I”

- no awareness that yesterday happened

- no emotional attachment to its actions

Ask an AI something today, and it won’t remember it tomorrow unless the system around it stores what you said. You, on the other hand, remember the moment, the feeling, and the meaning behind it. AI, meanwhile, starts over every time, like a stateless calculator with social skills.
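The difference between the model and the memory wrapped around it can be sketched in a few lines of Python. Everything here is hypothetical: `stateless_reply` is a toy stand-in for a model call, not a real API, and the `history` list plays the role of a separate memory feature. The function knows only what is in the text handed to it on that call.

```python
def stateless_reply(prompt):
    """Toy stand-in for a model call: the answer depends only on `prompt`."""
    text = prompt.lower()
    if "my name is" in text:
        rest = text.split("my name is", 1)[1].split()
        if rest:
            name = rest[0].strip(".,!?")
            return f"Your name is {name.capitalize()}."
    return "I don't know your name."


# Two separate calls: nothing carries over from the first to the second.
stateless_reply("My name is Dana.")
forgotten = stateless_reply("What is my name?")

# "Memory" lives outside the function: earlier messages are stored
# and sent again along with the new question.
history = ["My name is Dana."]
remembered = stateless_reply("\n".join(history + ["What is my name?"]))

print(forgotten)   # I don't know your name.
print(remembered)  # Your name is Dana.
```

The second answer looks like remembering, but the function itself never changed. The earlier message was simply handed back to it.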

Is Anyone Trying to Build Conscious AI?

No major lab is publicly aiming to build conscious AI. Their roadmaps focus on capability, safety, and control, not digital souls. Researchers are studying theories of consciousness, but they are not trying to create it.

And for good reason: a conscious AI would be harder to control, less predictable, and ethically complicated. Companies don’t want that liability, governments don’t want that threat, and scientists don’t want that chaos.

Could AI become conscious someday?

Not with today’s models. Even if future systems gain memory, autonomy, and a sense of self, the odds would still be low.

But complex systems have a way of surprising us.

The Bottom Line

Today’s AI is not alive. It isn’t aware. And it’s not inching toward consciousness on its own. It’s a brilliant pattern machine—a mirror polished by math—that reflects our thoughts so well we occasionally forget it’s just predicting what comes next.

Could future AI cross the line into something more? Not likely. The gap between imitation and awareness is vast. But it’s not impossible.

For now, remember: the “mind” you’re talking to is just math wearing a really convincing mask. So, enjoy the usefulness, even the insight.

But don’t mistake it for a person.
